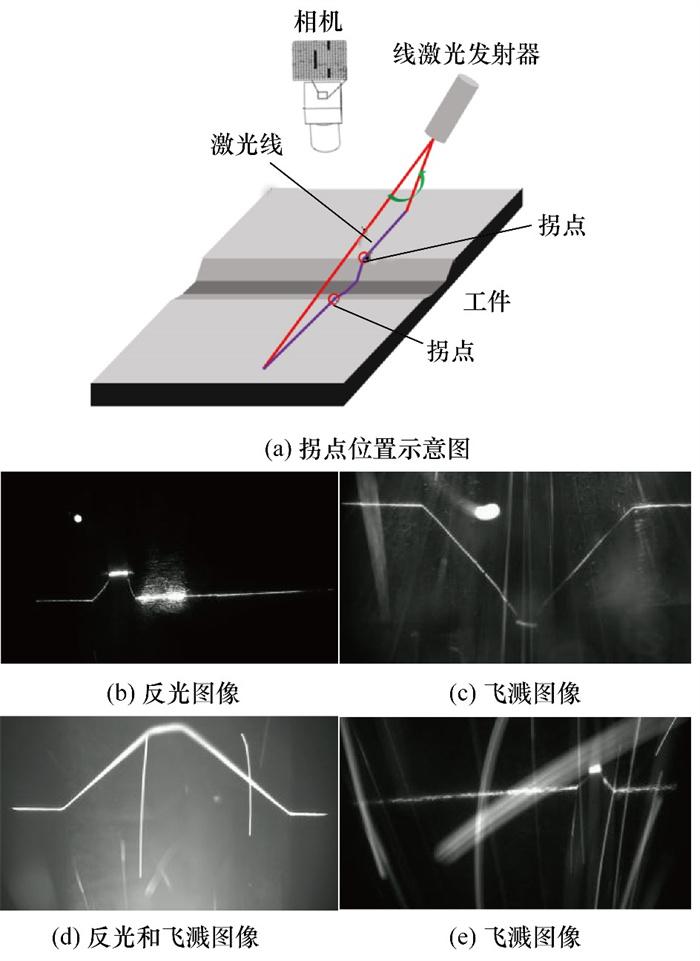

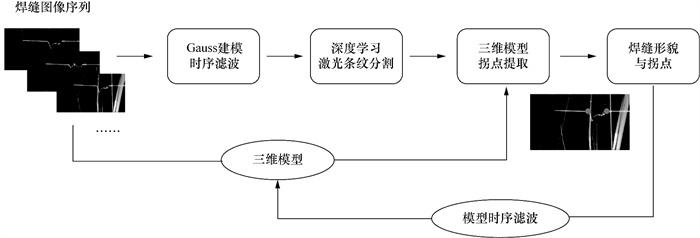

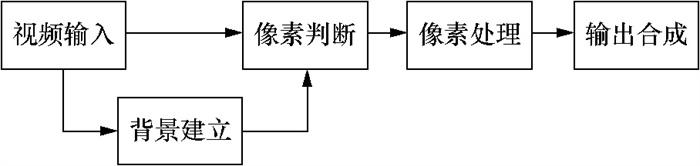

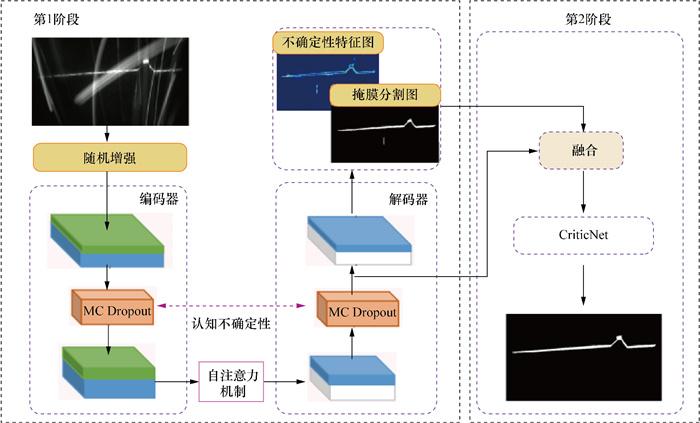

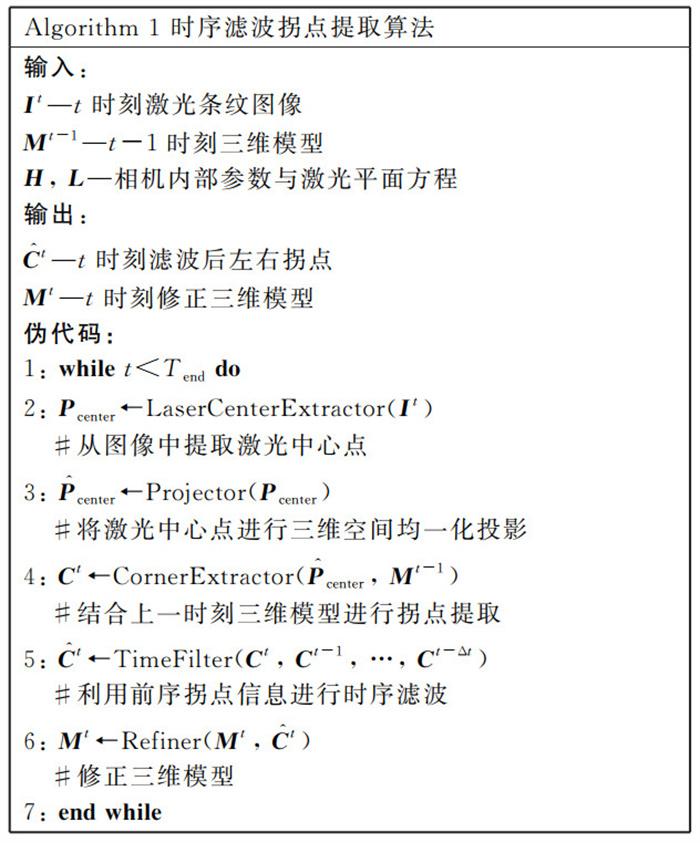

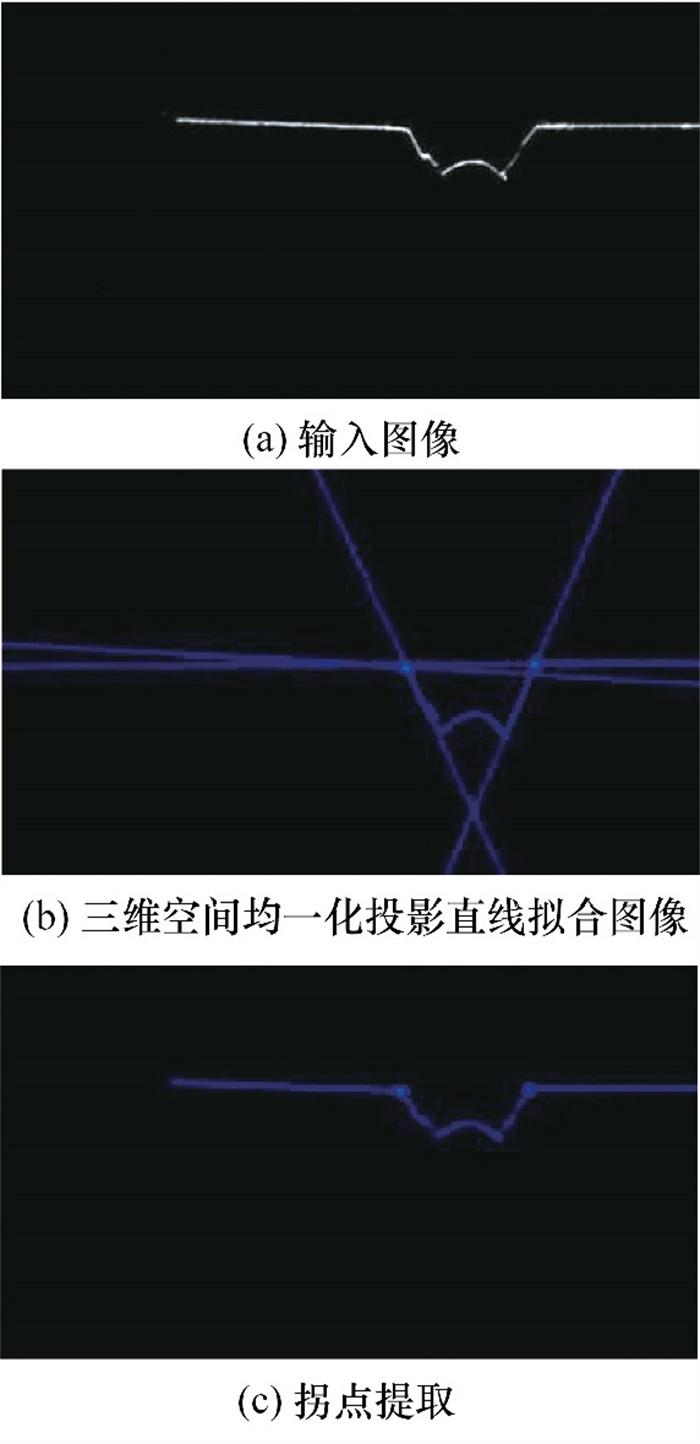

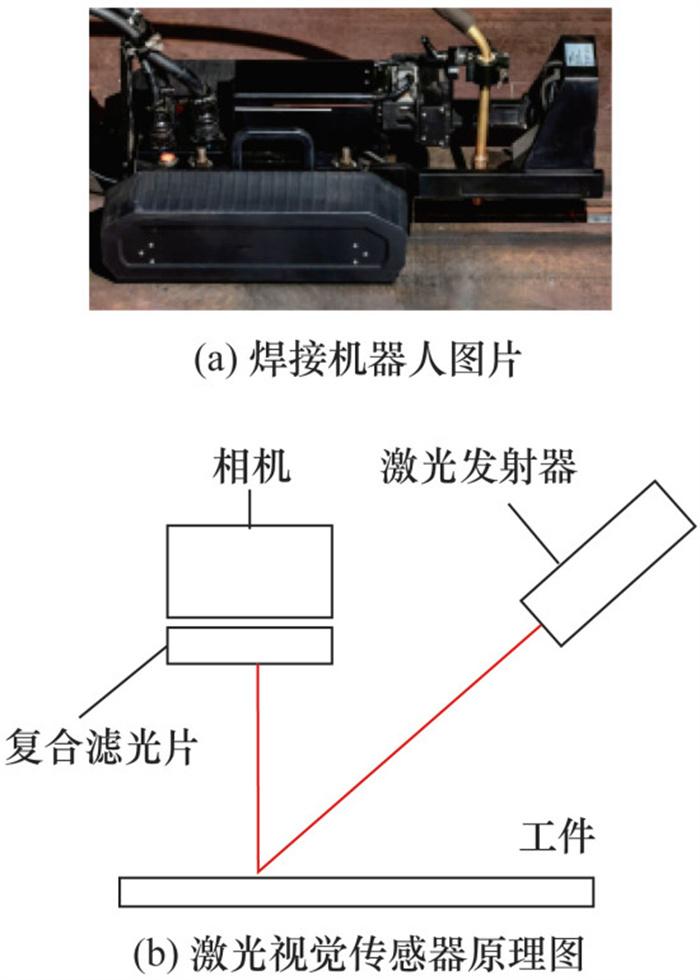

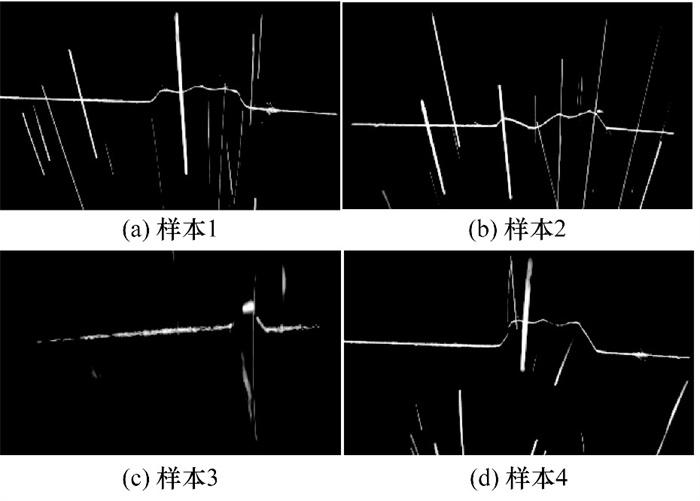

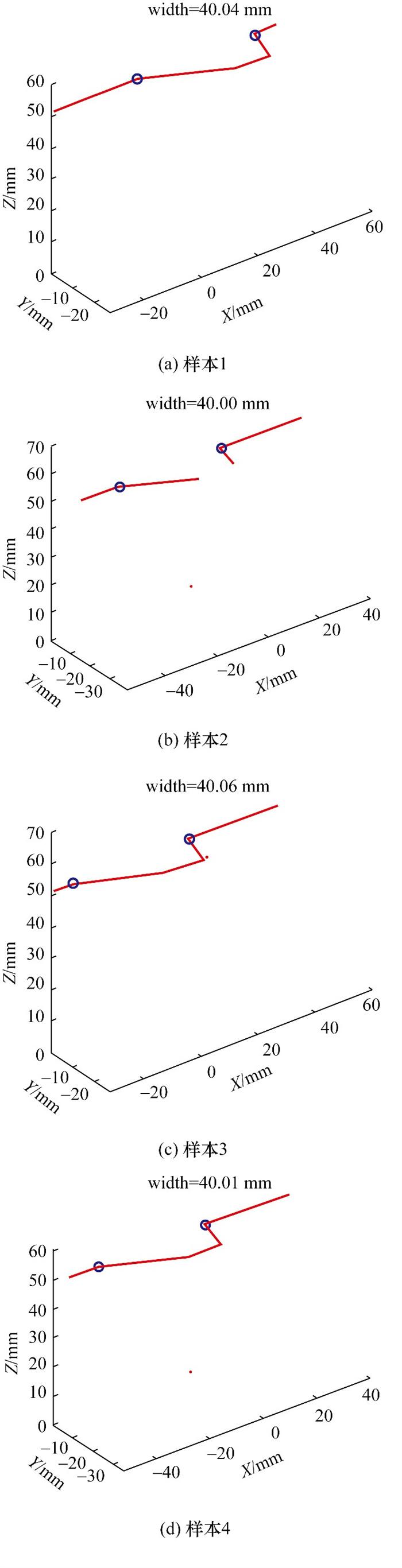

Objective: Welding remains a key process in modern manufacturing, but its automation is still hindered by complex, noisy welding environments. Accurate detection of weld seam geometry is critical for robotic seam tracking and welding quality assurance. In particular, detecting inflection points on the weld seam, which typically correspond to groove edges, is crucial for trajectory planning and control of welding robots. However, intense arc light, dynamic spatter, and reflective interference often degrade image quality, complicating the robust extraction of seam features. To address these challenges, this paper proposes a comprehensive framework for the three-dimensional (3D) reconstruction and inflection point detection of weld seams in complex environments, seeking to enhance noise robustness, spatial accuracy, and real-time performance in intelligent welding systems.

Methods: The proposed method comprises three sequential modules: temporal denoising, laser stripe segmentation, and 3D inflection point detection. First, a temporal filtering algorithm based on Gaussian background modeling is developed to capture frame-wise variations and suppress transient noise, such as welding spatter. This algorithm adaptively classifies pixels as foreground or background using statistical thresholds, thereby enhancing the signal-to-noise ratio. Second, a lightweight deep segmentation model incorporating uncertainty modeling and nonlocal self-attention is introduced. This model employs a two-stage architecture: an initial attention-equipped U-Net performs coarse segmentation, followed by a CriticNet-enhanced refinement stage guided by epistemic uncertainty maps. This strategy ensures continuity in stripe detection and robustness against weak exposure or partial occlusions. Finally, the segmented laser stripe centerlines are projected into 3D space based on calibrated structured-light principles.
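The projection of stripe pixels into 3D can be illustrated with a minimal sketch of line-structured-light triangulation. It assumes an ideal pinhole camera without lens distortion and a laser plane expressed in the camera frame as n · X + d = 0. The function name `pixel_to_laser_plane` and all parameter names are hypothetical and are not taken from the paper, whose calibration model may differ.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def pixel_to_laser_plane(
    u: float, v: float,
    fx: float, fy: float, cx: float, cy: float,
    plane_normal: Vec3, plane_offset: float,
) -> Vec3:
    """Intersect the camera ray through pixel (u, v) with the laser plane.

    Assumptions (illustrative only): pinhole intrinsics (fx, fy, cx, cy),
    no lens distortion, and a calibrated plane n . X + d = 0 given in
    camera coordinates.
    """
    # Back-project the pixel to a ray direction with unit depth.
    ray = ((u - cx) / fx, (v - cy) / fy, 1.0)
    denom = sum(n * r for n, r in zip(plane_normal, ray))
    if abs(denom) < 1e-12:
        raise ValueError("camera ray is parallel to the laser plane")
    # Solve n . (t * ray) + d = 0 for the scale t along the ray.
    t = -plane_offset / denom
    return (t * ray[0], t * ray[1], t * ray[2])


# Example call: a laser plane at z = 100 (same length unit as the calibration).
point = pixel_to_laser_plane(
    740.0, 360.0, 1000.0, 1000.0, 640.0, 360.0, (0.0, 0.0, 1.0), -100.0
)
```

Applying this to every centerline pixel of a frame yields one 3D profile of the weld cross-section; in practice the pixel coordinates would be undistorted first.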
An optimal view normalization step ensures that the viewpoint is aligned vertically with the laser plane, and a hierarchical geometric model of the weld cross-section is created to assist in the localization of inflection points. To improve temporal consistency, a sliding-window filter smooths the extracted inflection trajectories, and a feedback loop updates the 3D model in real time, enhancing prediction stability.

Results: The framework is tested on a custom dataset collected from real-world welding scenarios using a mobile welding robot equipped with a laser vision sensor. This dataset comprises over 21 000 frames captured under three representative conditions: nonuniform surfaces, intense reflection, and severe spatter. Quantitative evaluations demonstrate that the proposed method achieves a weld inflection point detection success rate of 78%, which is a 13.3% improvement over baseline methods. The 3D reconstruction accuracy remains within a submillimeter error margin (≤0.1 mm), with a maximum relative deviation of only 0.2%. Ablation studies indicate that each module (temporal filtering, deep segmentation, and 3D modeling) contributes substantially to the overall performance improvement. Additionally, the segmentation model achieves a mean intersection over union (mIoU) of 83.5% and a recall rate of 88.1% with only 0.67 million parameters, outperforming conventional U-Net and SegFormer baselines in both accuracy and efficiency.

Conclusions: This study introduces an effective and lightweight method for addressing the challenges of weld seam 3D reconstruction and inflection point detection in noisy environments. By combining temporal filtering, uncertainty-aware segmentation, and geometry-guided 3D analysis, the method demonstrates strong noise resilience and geometric accuracy.
These results highlight its strong potential for real-time application in intelligent welding systems, supporting accurate seam tracking and robotic welding guidance in complex industrial environments.
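As an illustration of the temporal denoising idea in the Methods, the following is a minimal sketch of a per-pixel single-Gaussian background model. It is not the paper's algorithm: the class name `GaussianPixelFilter`, the learning rate `alpha`, the threshold `k`, and the choice to replace outlier pixels with the background mean are all assumptions made for this example.

```python
from typing import List, Sequence


class GaussianPixelFilter:
    """Per-pixel running Gaussian model for suppressing transient outliers.

    Hypothetical sketch: each pixel keeps a running mean and variance.
    A pixel deviating by more than k standard deviations is treated as
    transient foreground (e.g. spatter) and replaced by the background mean.
    """

    def __init__(self, first_frame: Sequence[float], alpha: float = 0.05,
                 k: float = 2.5, init_var: float = 25.0, min_var: float = 4.0):
        self.mean: List[float] = [float(p) for p in first_frame]
        self.var: List[float] = [init_var] * len(first_frame)
        self.alpha = alpha      # learning rate of the background model
        self.k = k              # threshold in standard deviations
        self.min_var = min_var  # floor that keeps the threshold from collapsing

    def apply(self, frame: Sequence[float]) -> List[float]:
        out: List[float] = []
        for i, p in enumerate(frame):
            diff = float(p) - self.mean[i]
            if diff * diff > self.k * self.k * self.var[i]:
                # Foreground: emit the background estimate, leave the model as is.
                out.append(self.mean[i])
            else:
                # Background: update mean and variance, pass the pixel through.
                self.mean[i] += self.alpha * diff
                self.var[i] = max(
                    self.min_var,
                    (1.0 - self.alpha) * self.var[i] + self.alpha * diff * diff,
                )
                out.append(float(p))
        return out


# Example call on a flattened three-pixel "frame"; the last pixel is a bright flash.
flt = GaussianPixelFilter([10, 200, 10])
cleaned = flt.apply([11, 201, 255])
```

Because this sketch never updates the model on foreground pixels, a lasting scene change would be suppressed indefinitely; a practical variant would absorb such changes at a slower rate.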