1 Signal Model

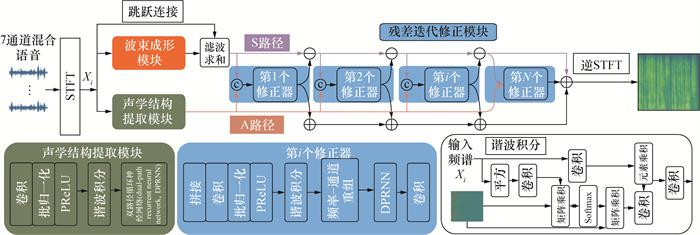

2 Harmonic BM

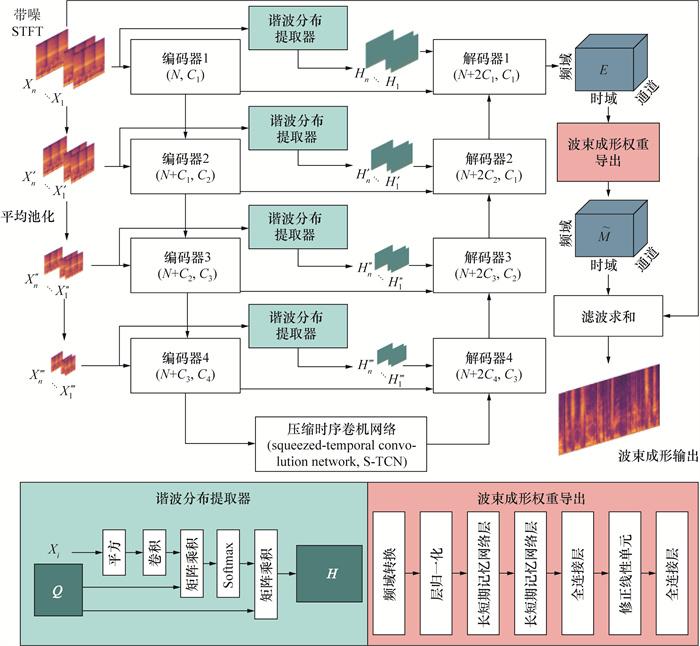

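2.1 Harmonic Distribution Extractor in the Beamforming Network

2.2 Beamforming with Multi-Scale Enhanced Spectral Information

2.3 Residual Iterative Refinement Module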

3 Experimental Method and Baselines

3.1 Dataset

3.2 Configuration

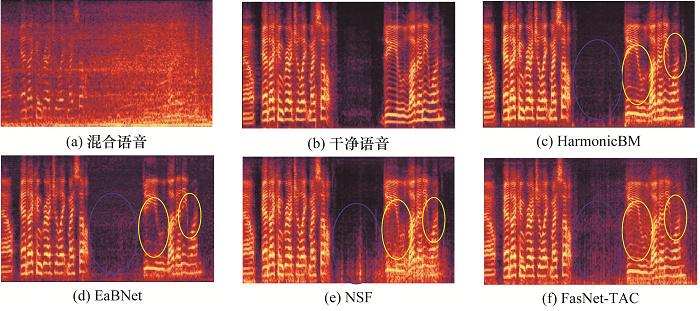

4 Results and Analysis

4.1 Method Comparison

Table 1 Comparison of Harmonic BM with baseline methods

| Method | Parameters (×10^6) | SNR = -10 dB | SNR = -5 dB | SNR = -2 dB | SNR = 0 dB | Mean |
| --- | --- | --- | --- | --- | --- | --- |
| Noisy | — | 1.41/0.35/-10.59 | 1.51/0.40/-5.65 | 1.60/0.43/-2.11 | 1.60/0.51/-0.42 | 1.53/0.42/-4.69 |
| NSF | 12.96 | 2.66/0.87/4.99 | 2.85/0.89/6.27 | 2.93/0.90/6.84 | 2.97/0.91/7.17 | 2.85/0.89/6.32 |
| FasNet-TAC | 2.76 | 2.37/0.85/5.32 | 2.67/0.89/7.53 | 2.81/0.90/8.40 | 2.90/0.91/8.91 | 2.69/0.89/7.54 |
| FT-JNF | 3.35 | 2.70/0.88/6.11 | 2.82/0.90/7.19 | 2.88/0.91/7.73 | 2.92/0.91/8.81 | 2.83/0.90/7.46 |
| EaBNet | 2.82 | 2.83/0.90/6.95 | 3.30/0.92/8.31 | 3.13/0.93/8.91 | 3.18/0.93/9.24 | 3.11/0.92/8.35 |
| TaEr | 6.03 | 2.84/0.91/7.23 | 3.28/0.92/8.82 | 3.31/0.92/9.45 | 3.40/0.93/9.98 | 3.20/0.92/8.87 |
| Oracle MVDR | — | 2.12/0.69/7.55 | 2.32/0.73/8.10 | 2.47/0.77/9.00 | 2.51/0.83/9.99 | 2.36/0.75/8.66 |
| Harmonic BM | 3.33 | 3.25/0.93/8.41 | 3.40/0.94/9.71 | 3.47/0.94/10.30 | 3.50/0.95/10.61 | 3.41/0.94/9.76 |

Note: each cell reports PESQ/STOI/SI-SDR; higher values indicate better speech quality.
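This section does not define SI-SDR, so the following is only a minimal sketch, assuming the commonly used definition in which both signals are made zero-mean and the estimate is projected onto the reference before the energy ratio is taken. The function name `si_sdr` and the `eps` stabiliser are illustrative choices, not taken from the paper, and the evaluation code behind Table 1 may differ.

```python
import math


def si_sdr(estimate, reference, eps=1e-12):
    """Scale-invariant SDR in dB for two equal-length 1-D signals.

    Assumed definition (not confirmed by the paper):
        target = (<est, ref> / ||ref||^2) * ref
        SI-SDR = 10 * log10(||target||^2 / ||est - target||^2)
    """
    if len(estimate) != len(reference):
        raise ValueError("signals must have the same length")

    # Remove the mean so that a DC offset does not affect the score.
    est_mean = sum(estimate) / len(estimate)
    ref_mean = sum(reference) / len(reference)
    est = [e - est_mean for e in estimate]
    ref = [r - ref_mean for r in reference]

    # Optimal scaling of the reference: projection of est onto ref.
    dot = sum(e * r for e, r in zip(est, ref))
    ref_energy = sum(r * r for r in ref)
    alpha = dot / (ref_energy + eps)

    # Split the estimate into a scaled-target part and a residual part.
    target = [alpha * r for r in ref]
    residual = [e - t for e, t in zip(est, target)]

    target_energy = sum(t * t for t in target)
    residual_energy = sum(n * n for n in residual)
    return 10.0 * math.log10((target_energy + eps) / (residual_energy + eps))


if __name__ == "__main__":
    reference = [1.0, -1.0, 1.0, -1.0]
    estimate = [1.1, -0.9, 0.9, -1.1]  # reference plus a small error
    print(round(si_sdr(estimate, reference), 2))
```

Because the reference is rescaled by the projection coefficient, multiplying the estimate by any non-zero gain leaves the score unchanged, which is the property that distinguishes SI-SDR from plain SDR.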

Table 2 Ablation results for the Harmonic BM model

| Model variant | Parameters (×10^6) | PESQ | STOI | SI-SDR |
| --- | --- | --- | --- | --- |
| Full model | 3.33 | 3.41 | 0.94 | 9.76 |
| 1 | 2.95 | 3.34 | 0.92 | 9.06 |
| 2 | 2.82 | 3.12 | 0.92 | 8.35 |
| 3 | 3.20 | 3.15 | 0.93 | 8.84 |